ActiveSupport's Class#descendants can be slow. In a bigger system with many layers of descendants, finding all of them can be time-consuming:

    require 'benchmark'

    # Assign first: with `puts Benchmark.measure do ... end` the block would bind to puts
    result = Benchmark.measure do
      100.times { Dispatchers::Base.descendants }
    end

    puts result

    # 5.235370 0.015754 5.251124 ( 5.251069)

In the code I've been working on, this meant that a single lookup was taking around 50ms. That is a lot, especially if the lookup is used extensively.
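To see where the time goes, here is a minimal sketch of a heap-scanning lookup, similar in spirit to how descendants were found before Ruby offered a native alternative. The helper name `object_space_descendants` and the `Animal` classes are made up for illustration; this is not the exact ActiveSupport source.

```ruby
# Hypothetical helper: finds every class inheriting from `base` by walking
# all live class objects on the heap. The cost grows with the heap size,
# not with the number of descendants.
def object_space_descendants(base)
  ObjectSpace.each_object(base.singleton_class).reject do |klass|
    klass.singleton_class? || klass.equal?(base)
  end
end

class Animal; end
class Dog < Animal; end
class Puppy < Dog; end

puts object_space_descendants(Animal).map(&:name).sort.inspect
# ["Dog", "Puppy"]
```

Every call repeats the full scan, which is why repeated lookups add up quickly in a large application.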

To mitigate this, I've implemented a simple caching layer on top of the lookup that would make things fast:

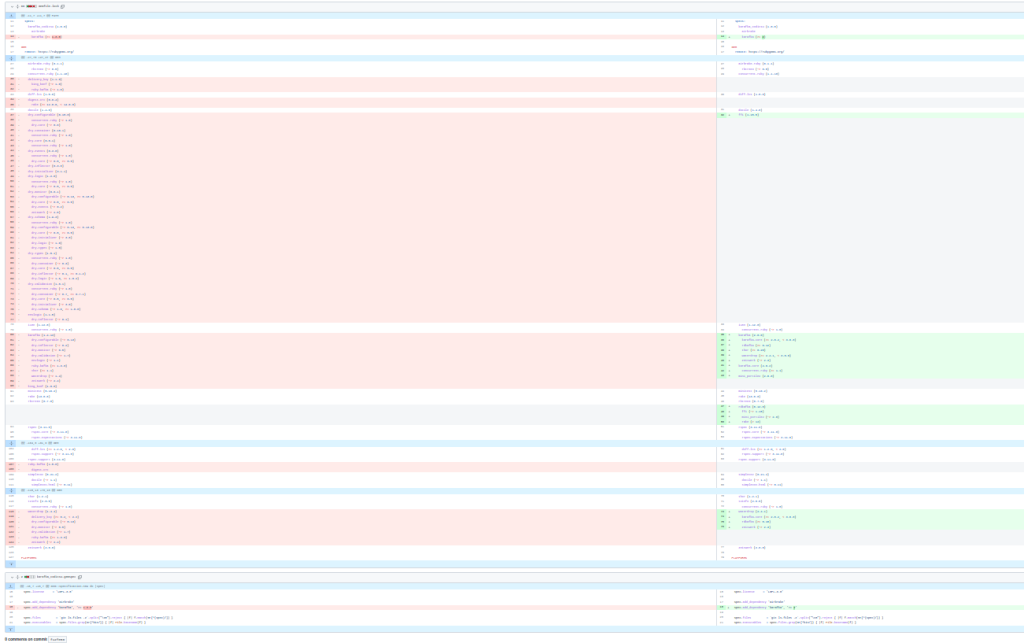

    module Mixins
      module CachedDescendants
        extend ActiveSupport::Concern

        cattr_accessor :descendants_map

        self.descendants_map = Concurrent::Hash.new

        class << self
          # Clears the descendants map cache - can be hooked to Rails reloader
          def reload!
            descendants_map.clear
          end
        end

        included do
          class << self
            # @return [Array<Class>] array with descendants classes
            def cached_descendants
              ::Mixins::CachedDescendants.descendants_map[self] ||= descendants
            end
          end
        end
      end
    end

When included and used, it would give great results:

    result = Benchmark.measure do
      100.times { Dispatchers::Base.cached_descendants }
    end

    puts result

    # 0.000023 0.000001 0.000024 ( 0.000024)

99.99956% faster!
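The speed comes with a trade-off: a memoized result goes stale when new subclasses appear, which is why the mixin exposes reload!. The sketch below is a plain-Ruby stand-in for the cache above, so it runs without ActiveSupport; `PlainDescendantsCache`, `Handler`, and the other names are hypothetical and only illustrate the behavior.

```ruby
# Plain-Ruby stand-in for the cached lookup (hypothetical names throughout).
module PlainDescendantsCache
  CACHE = {}
  LOCK = Mutex.new

  # Drops all memoized lookups, e.g. after code reloading.
  def self.reload!
    LOCK.synchronize { CACHE.clear }
  end

  # Memoized lookup, keyed by the class that asks.
  def cached_descendants
    LOCK.synchronize { CACHE[self] ||= uncached_descendants }
  end

  # Slow path: scan the heap for classes inheriting from self.
  def uncached_descendants
    ObjectSpace.each_object(singleton_class).reject do |klass|
      klass.singleton_class? || klass.equal?(self)
    end
  end
end

class Handler
  extend PlainDescendantsCache
end

class EmailHandler < Handler; end

puts Handler.cached_descendants.map(&:name).inspect # ["EmailHandler"]

class SmsHandler < Handler; end

# The memoized array is stale: SmsHandler is missing until the cache is cleared.
puts Handler.cached_descendants.include?(SmsHandler) # false

PlainDescendantsCache.reload!
puts Handler.cached_descendants.include?(SmsHandler) # true
```

In a Rails app, clearing the cache on every code reload keeps development behavior correct; in production, where classes are loaded once, the cache rarely needs clearing.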

Such code, like any other, deserves to be tested. I wrote some specs for it, including a relatively simple one:

    context 'when there are two independent bases' do
      let(:base1) do
        Class.new do
          include ::Mixins::CachedDescendants
        end
      end

      let(:base2) do
        Class.new do
          include ::Mixins::CachedDescendants
        end
      end

      before do
        Array.new(5) { Class.new(base1) }
        Array.new(5) { Class.new(base2) }
      end

      it 'expect for them not to interact' do
        expect(base1.cached_descendants.size).to eq(5)
        expect(base2.cached_descendants.size).to eq(5)
        expect(base1.cached_descendants & base2.cached_descendants).to be_empty
      end
    end

It would just ensure that the way we cache does not create collisions for independent descendants trees.

But once in a while, this code would randomly fail:

    1) Mixins::CachedDescendants when there are two independent bases expect for them not to interact
       Failure/Error: expect(base1.cached_descendants.size).to eq(5)

         expected: 5
              got: 4

         (compared using ==)

How can I create five classes and suddenly have only four? I initially thought something was wrong with the descendants lookup for anonymous classes. However, this functionality is heavily used by many, including me, and it has never caused any problems. On top of that, why would it fail only once in a while?

When something fails randomly, it usually means that there's an external factor to it. One that operates under the hood. It wasn't different in this case.

After some investigation, I was able to reproduce it:

    GC.disable

    puts "Total classes before: #{ObjectSpace.count_objects[:T_CLASS]}"
    puts "String subclasses count before: #{String.subclasses.count}"

    100.times { Class.new(String) }

    puts "Total classes after defining: #{ObjectSpace.count_objects[:T_CLASS]}"
    puts "String subclasses count after defining: #{String.subclasses.count}"

    GC.enable
    puts 'Running GC...'
    GC.start

    puts "Total classes after GC: #{ObjectSpace.count_objects[:T_CLASS]}"
    puts "String subclasses count after GC: #{String.subclasses.count}"

    # Total classes after defining: 1324
    # String subclasses count after defining: 102
    # Running GC...
    # Total classes after GC: 1124
    # String subclasses count after GC: 2

Boom! Anonymous classes are being garbage collected! Classes that are not referenced anywhere are subject to garbage collection like any other object, and this code was not keeping a reference to them:

    before do
      Array.new(5) { Class.new(base1) }
      Array.new(5) { Class.new(base2) }
    end

Hence, the spec would fail if GC kicked in exactly between the class definitions and the spec execution. This is why it would only fail once in a while.

Fixing such an issue required only minimal changes to the spec:

    let(:descendants) do
      # This needs to be memoized, otherwise Ruby GC may remove those in between assertions
      [
        Array.new(5) { Class.new(base1) },
        Array.new(5) { Class.new(base2) }
      ]
    end

    before { descendants }

That way, the anonymous classes would be referenced throughout the lifetime of this spec.
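The same effect can be observed outside RSpec. The following is a rough sketch in plain Ruby, with a made-up `Plugin` base class and a hypothetical `live_subclasses` helper: classes held in an array are guaranteed to survive a GC run, while unreferenced ones may or may not be collected.

```ruby
class Plugin; end

# Hypothetical helper: direct subclasses of `base` that are still on the heap.
def live_subclasses(base)
  ObjectSpace.each_object(base.singleton_class).select do |klass|
    klass.superclass.equal?(base)
  end
end

# Unreferenced: nothing points at these classes once the block returns.
5.times { Class.new(Plugin) }

# Referenced: the array keeps every class reachable.
kept = Array.new(5) { Class.new(Plugin) }

GC.start

alive = live_subclasses(Plugin)

puts "kept classes still visible: #{kept.all? { |klass| alive.include?(klass) }}"
puts "live subclasses: #{alive.size} (5 are guaranteed, the rest depends on GC)"
```

Only the first line of output is deterministic. The total count can be anywhere between 5 and 10, which is exactly the kind of variance that made the original spec flaky.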

Summary (TL;DR)

Anonymous classes and modules are subject to garbage collection like any other object. Unless you hold a reference to them, they may be gone before you get to use them via #descendants or similar lookups. Always reference them in some way or face the unexpected.

Afterword

Jean Boussier pointed out to me that there's an ActiveSupport::DescendantsTracker that can also be used to improve the lookup performance, and that Ruby 3.1 has Class#subclasses, which is also much faster than iterating over ObjectSpace. While both are several times faster than the "old" lookup, in dev they are still almost two orders of magnitude slower than caching the descendants; in production, the performance is similar.

0.002020 vs. 0.000023 in dev and 0.000039 vs. 0.000042 in production.
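For reference, a minimal sketch of how a full descendants list could be built on top of Class#subclasses, assuming Ruby 3.1 or newer. The helper `descendants_via_subclasses` and the `Shape` hierarchy are hypothetical; ActiveSupport ships its own implementation.

```ruby
# Hypothetical helper: walks the class tree through Class#subclasses
# (Ruby 3.1+) instead of scanning the whole heap.
def descendants_via_subclasses(base)
  base.subclasses.flat_map { |sub| [sub, *descendants_via_subclasses(sub)] }
end

class Shape; end
class Polygon < Shape; end
class Triangle < Polygon; end
class Circle < Shape; end

# The order of Class#subclasses is not something to rely on, so sort for display.
puts descendants_via_subclasses(Shape).map(&:name).sort.inspect
# ["Circle", "Polygon", "Triangle"]
```

Note that this lookup is subject to the same garbage collection caveat: anonymous subclasses that nothing references can disappear from the result.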

The best code is no code, so now I can deprecate my code. The strong class references, however, are still valid and worth keeping in mind.

Cover photo by Alper Orus, licensed under Attribution-NonCommercial-ShareAlike 2.0 Generic (CC BY-NC-SA 2.0). Image has been cropped.